Lectures

You can download the lectures here. We will try to upload lectures prior to their corresponding classes.

Lecture 3 Review III and Constrained Optimization

tl;dr: Constrained Optimization

[notes]

Suggested Readings:

Lecture Notes

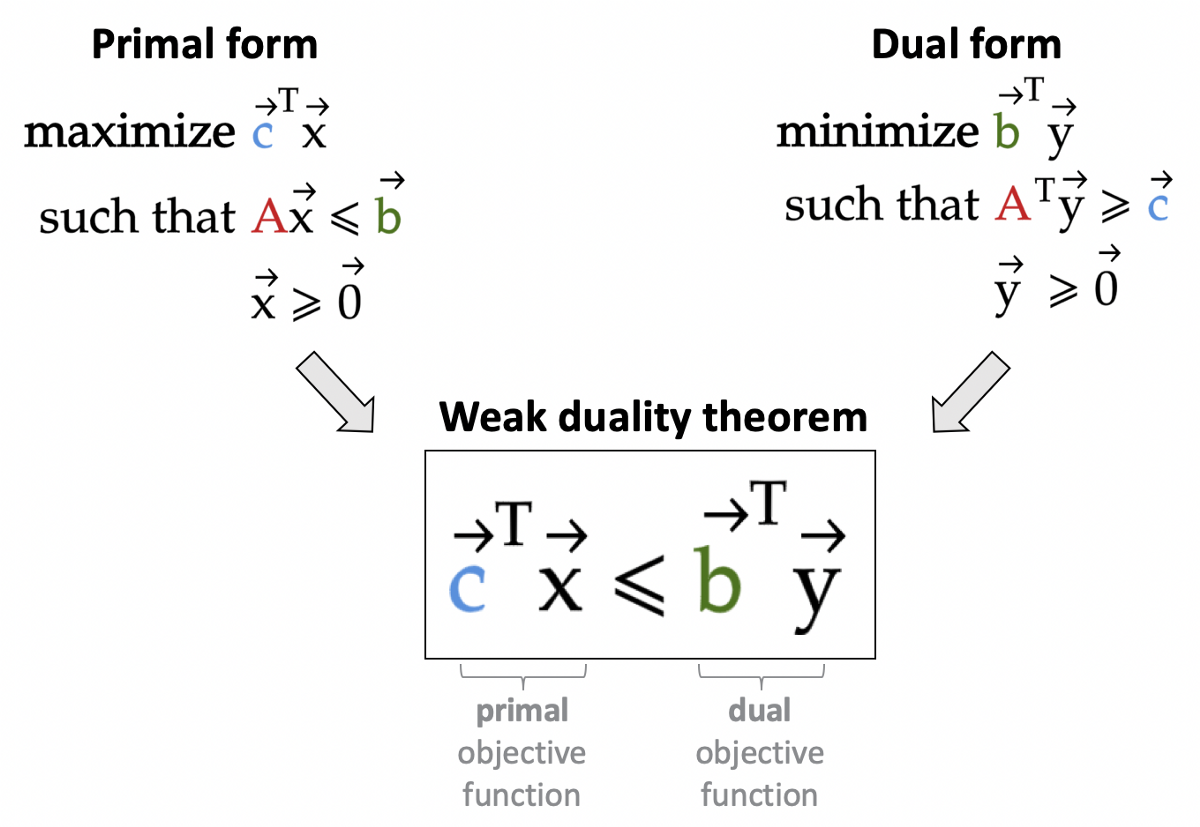

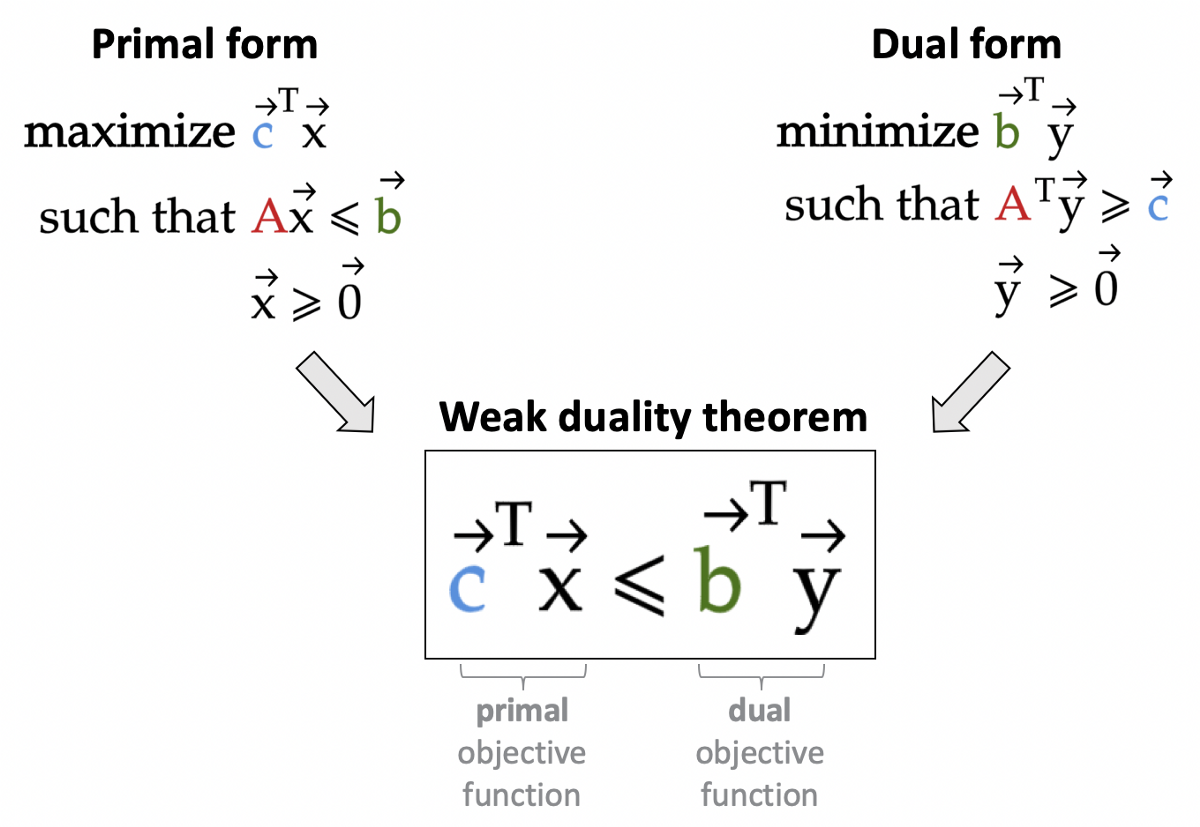

Lecture 5 Linear Programming I

tl;dr: LP

[notes]

Suggested Readings:

Chapter 13 of Numerical Optimization by Jorge Nocedal and Stephen Wright.

Lecture 6 LP II and Interior Point Method

tl;dr: LP

[notes]

Suggested Readings:

Chapter 13 of Numerical Optimization by Jorge Nocedal and Stephen Wright.

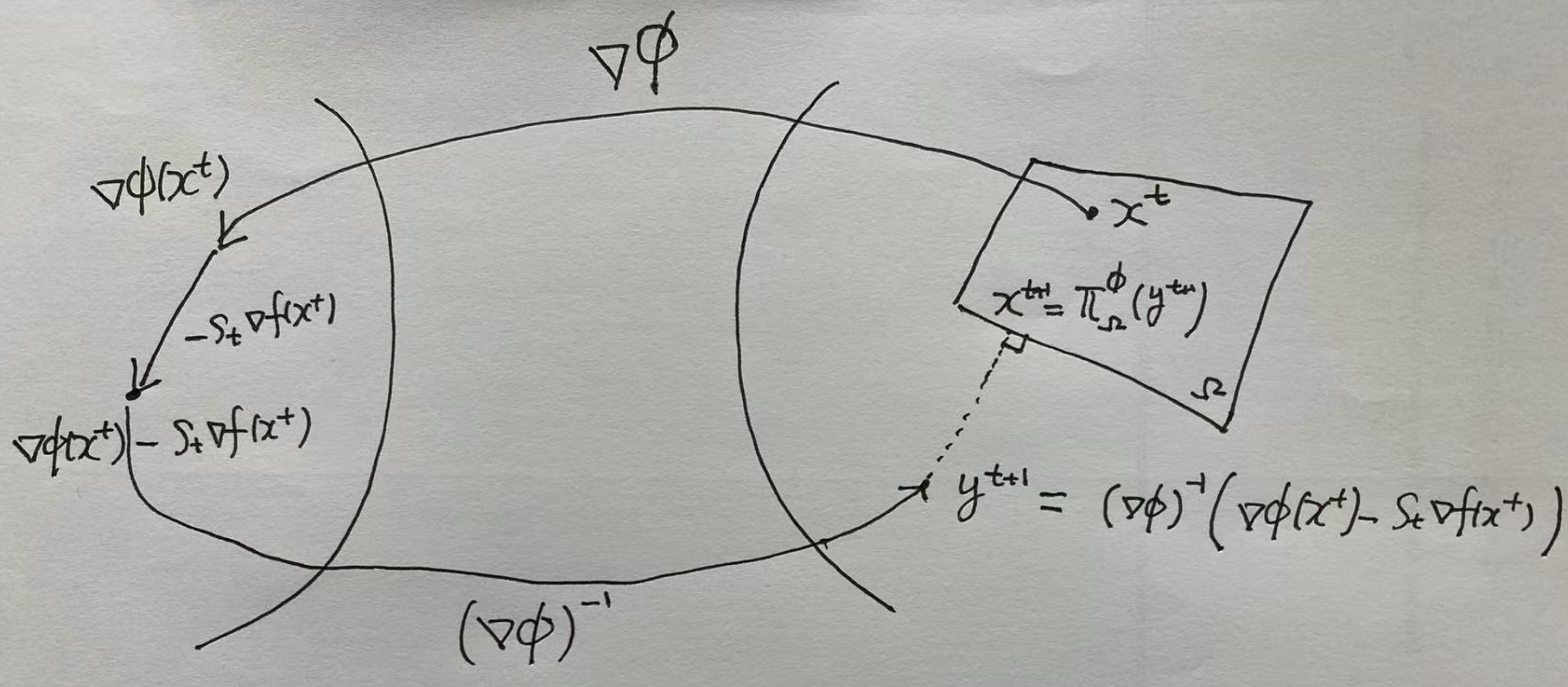

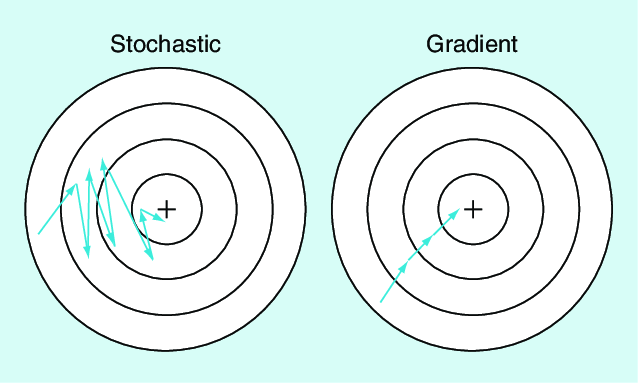

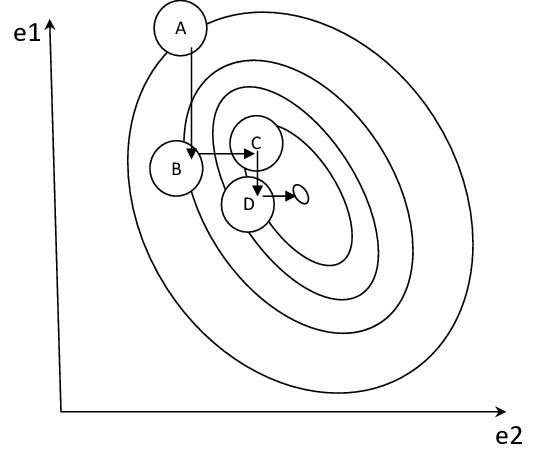

Lecture 7 Mirror Descent

tl;dr: Projected Gradient Descent, Mirror Descent

[notes]

Suggested Readings:

Lecture Notes

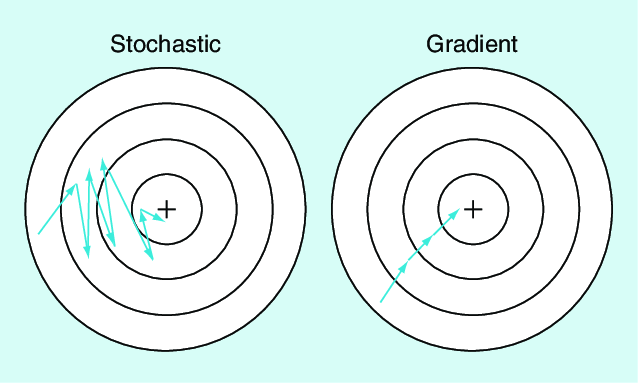

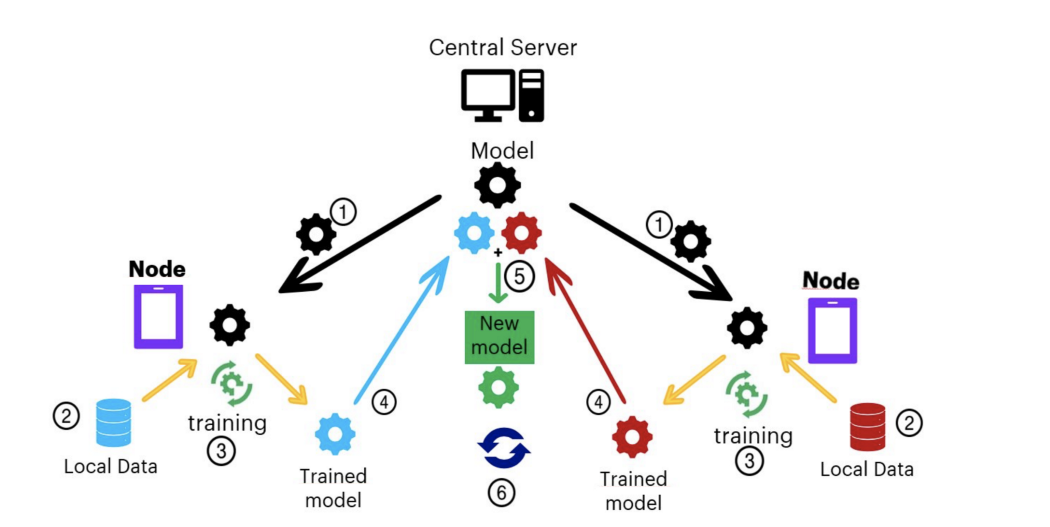

Lecture 10 Stochastic Variance Reduced Gradient

tl;dr: SVRG

[notes]

Suggested Readings:

Johnson, Rie, and Tong Zhang. “Accelerating stochastic gradient descent using predictive variance reduction.” Advances in neural information processing systems 26 (2013): 315-323.

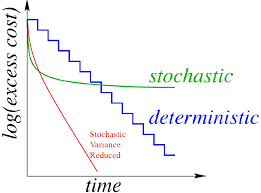

Lecture 11 Federated Optimization

tl;dr: Federated Learning and Federated Optimization

[notes]

Suggested Readings:

Li, Xiang, Kaixuan Huang, Wenhao Yang, Shusen Wang, and Zhihua Zhang. “On the Convergence of FedAvg on Non-IID Data.” In International Conference on Learning Representations. 2019.

Kairouz, Peter, H. Brendan McMahan, Brendan Avent, Aurélien Bellet, Mehdi Bennis, Arjun Nitin Bhagoji, Kallista Bonawitz et al. “Advances and open problems in federated learning.” arXiv preprint arXiv:1912.04977 (2019).

Lecture 12 Block Coordinate Descent

tl;dr: BCD

[notes]

Suggested Readings:

Wright, Stephen J. “Coordinate descent algorithms.” Mathematical Programming 151, no. 1 (2015): 3-34.

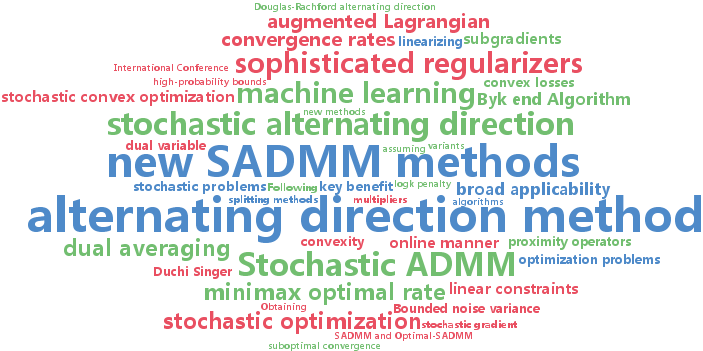

Lecture 13 Alternating Direction Method of Multipliers

tl;dr: ADMM

[notes]

Suggested Readings:

Boyd, Stephen, Neal Parikh, Eric Chu, Borja Peleato, and Jonathan Eckstein. “Distributed optimization and statistical learning via the alternating direction method of multipliers.” Foundations and Trends in Machine Learning 3, no. 1 (2010): 1-122.

Lecture 14 Course Review

tl;dr: Review